VMware vSphere 8 brings numerous enhancements, as every major vSphere release does. Let’s get right to it without wasting any more time.

vSphere Scalability

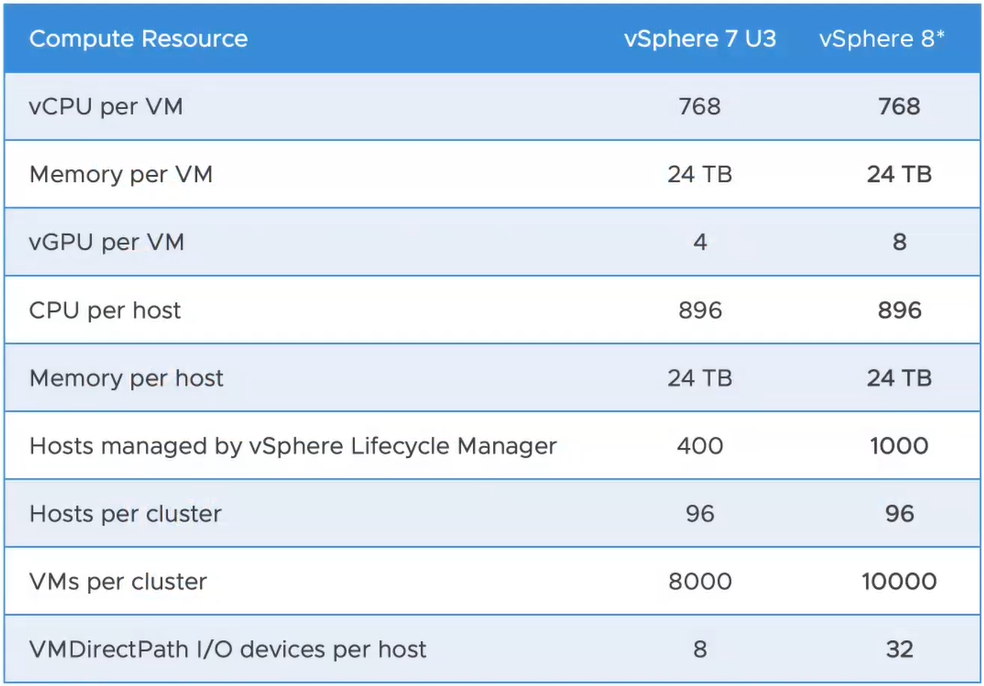

There are a few improvements to vSphere scalability:

- vGPUs per VM increase from 4 to 8

- Lifecycle Manager can now manage 1,000 hosts (up from 400)

- You can now manage 10,000 VMs per cluster (up from 8,000)

- Each ESXi host can now have up to 32 VMDirectPath I/O devices

Please note that these limits are subject to change. The latest information is always available on the VMware Configuration Maximums (ConfigMax) page.
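To make the new limits concrete, here is a minimal Python sketch that checks a planned design against the maximums listed above. The limit values are taken from this article. The dictionary keys and the `check_design()` helper are hypothetical illustrations, not a VMware API. Confirm the values on the ConfigMax page before relying on them.

```python
# Hypothetical sketch: the keys and helper below are illustrative, not a VMware API.
# Limit values are the vSphere 8 maximums quoted in this article; confirm them on
# the VMware ConfigMax page before relying on them.
VSPHERE8_LIMITS: dict[str, int] = {
    "vgpus_per_vm": 8,
    "lifecycle_manager_hosts": 1_000,
    "vms_per_cluster": 10_000,
    "vmdirectpath_devices_per_host": 32,
}


def check_design(design: dict[str, int]) -> list[str]:
    """Return one message for each value in `design` that is unknown or over its limit."""
    problems: list[str] = []
    for key, value in design.items():
        limit = VSPHERE8_LIMITS.get(key)
        if limit is None:
            problems.append(f"{key}: no limit recorded")
        elif value > limit:
            problems.append(f"{key}: {value} exceeds the limit of {limit}")
    return problems


if __name__ == "__main__":
    plan = {"vgpus_per_vm": 6, "vms_per_cluster": 9_500, "lifecycle_manager_hosts": 1_200}
    for problem in check_design(plan):
        print(problem)  # prints "lifecycle_manager_hosts: 1200 exceeds the limit of 1000"
```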

Resource Management Improvements

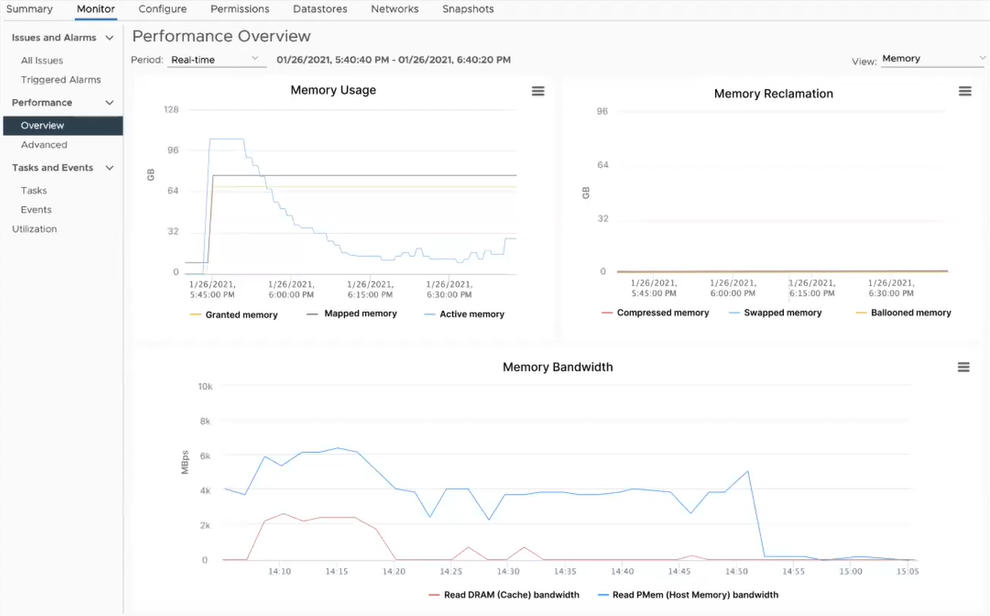

DRS and Persistent Memory

vSphere 8 gives DRS a better understanding of the memory usage of workloads that use persistent memory (PMEM). This lets DRS place VMs onto hosts more intelligently without affecting performance or resource utilization.

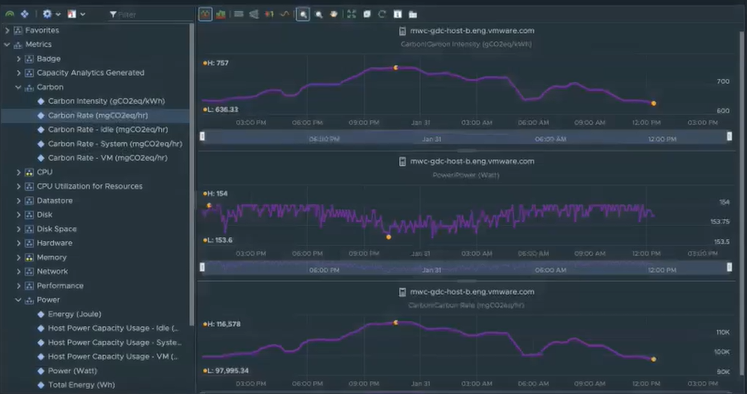

vSphere Green Metrics

In vSphere 8, a number of new “green” metrics are exposed to vROps to help manage the energy usage and carbon emissions of the data center. These include:

- Power.Capacity.UsageVm

- Power.Capacity.UsageSystem

- Power.Capacity.UsageIdle

The Carbon and Power metrics can then be viewed in vROps.
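One practical use of these metrics is to see how much of a host's power goes to running VMs compared with overhead and idle draw. The Python sketch below assumes that the three metrics are non-overlapping components, in the same unit, that add up to the host's total power. This article does not state that. The function name and the sample readings are hypothetical.

```python
def power_breakdown(usage_vm: float, usage_system: float, usage_idle: float) -> dict[str, float]:
    """Return each green metric as a percentage of their combined total.

    Assumption: the three metrics are non-overlapping components, in the same
    unit (e.g. watts), that together make up the host's power draw.
    """
    total = usage_vm + usage_system + usage_idle
    if total <= 0:
        raise ValueError("combined power usage must be positive")
    return {
        "Power.Capacity.UsageVm": 100 * usage_vm / total,
        "Power.Capacity.UsageSystem": 100 * usage_system / total,
        "Power.Capacity.UsageIdle": 100 * usage_idle / total,
    }


if __name__ == "__main__":
    # Hypothetical sample readings for one host, in watts.
    for metric, share in power_breakdown(300.0, 50.0, 150.0).items():
        print(f"{metric}: {share:.1f}%")
```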

vSphere 8 Security

In vSphere 8 there are a number of important security improvements:

- Improvements to Intel SGX (Software Guard Extensions)

- vSphere 8 supports only TLS 1.2 and higher (legacy TLS versions are no longer supported)

- Untrusted binaries are prevented from running by default

- SSH can be automatically disabled after a specified timeout on ESXi hosts

Further details on vSphere 8 security enhancements will be available in the vSphere 8 Security Configuration Guide shortly.
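For scripts and tools that talk to vCenter or ESXi over HTTPS, the TLS change mainly means the client must be able to negotiate TLS 1.2 or later. The following minimal Python sketch uses only the standard `ssl` module to pin a client context to that minimum. The function name is hypothetical.

```python
import ssl


def make_vsphere_client_context() -> ssl.SSLContext:
    """Build a client-side TLS context that will only negotiate TLS 1.2 or newer."""
    # create_default_context() turns on certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Refuse TLS 1.0/1.1 explicitly, matching vSphere 8's TLS 1.2 minimum.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


if __name__ == "__main__":
    ctx = make_vsphere_client_context()
    print(ctx.minimum_version)
```

You can pass a context built this way to a client, for example `urllib.request.urlopen(url, context=ctx)`. The handshake then fails outright instead of falling back to an older protocol. Recent Python releases may already default to this minimum, but setting it explicitly documents the intent.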

vSphere Lifecycle Management

Deprecation of Update Manager (VUM)

Update Manager (VUM) is deprecated. It is still supported in vSphere 8 but will no longer be supported in later versions of vSphere.

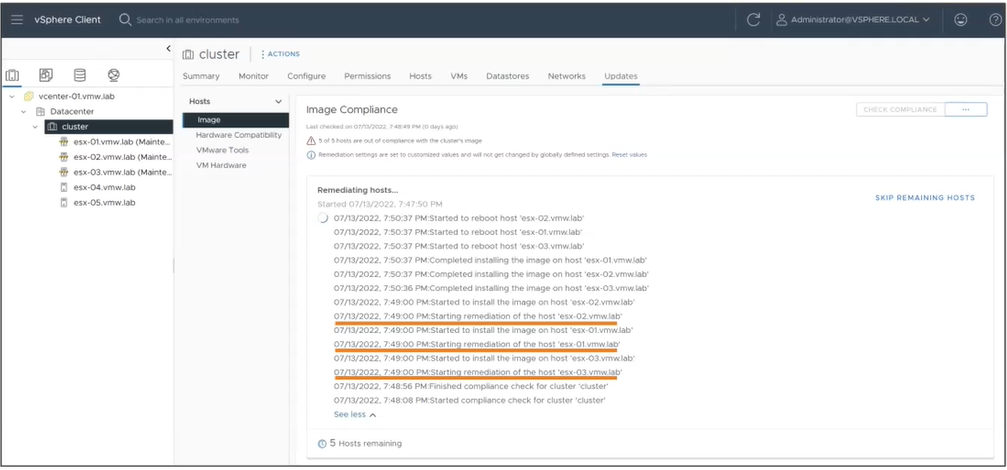

Staging Cluster Images

Just as with VUM baselines, it is now possible to stage cluster images to ESXi hosts ahead of remediation. This significantly reduces the time it takes to remediate ESXi hosts.

You can stage all hosts within a cluster with one click. Furthermore, the hosts do not need to be in maintenance mode to stage cluster images.

Parallel Remediation

Rather than updating one host at a time, vSphere 8 can remediate ESXi hosts in parallel. The administrator places the hosts into maintenance mode before remediation. Lifecycle Manager then remediates all hosts that are in maintenance mode in parallel, up to a configurable maximum (10 by default).
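The scheduling rule described above can be illustrated with a small Python model: only hosts in maintenance mode are remediated, and no more than a configurable number at once. This is a conceptual sketch, not Lifecycle Manager code. The `Host` class and `plan_parallel_remediation()` function are hypothetical, and grouping hosts into fixed-size waves is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    in_maintenance_mode: bool


def plan_parallel_remediation(hosts: list[Host], max_parallel: int = 10) -> list[list[str]]:
    """Group hosts already in maintenance mode into waves of at most `max_parallel`.

    Simplification: real Lifecycle Manager scheduling is not modelled; hosts are
    simply remediated in fixed-size waves, in list order.
    """
    if max_parallel < 1:
        raise ValueError("max_parallel must be at least 1")
    # Only hosts the administrator has placed into maintenance mode are eligible.
    ready = [host.name for host in hosts if host.in_maintenance_mode]
    return [ready[i:i + max_parallel] for i in range(0, len(ready), max_parallel)]


if __name__ == "__main__":
    # Twelve hypothetical hosts; esxi-05 was not placed into maintenance mode.
    cluster = [Host(f"esxi-{n:02d}", n != 5) for n in range(1, 13)]
    for wave, names in enumerate(plan_parallel_remediation(cluster), start=1):
        print(f"wave {wave}: {', '.join(names)}")
```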

Enhanced Recovery of vCenter

If a vCenter Server is restored from backup, the cluster configuration reverts to its state at backup time. This causes problems if many vCenter-level changes were made in the meantime. For example, if you add an ESXi host to a cluster and then restore vCenter to a point in time before the host was added, vCenter no longer knows about a host that is still part of the cluster, which gets confusing.

With the new version of vCenter Server in vSphere 8, the cluster becomes the “source of truth”. In the example above, after the vCenter Server is restored, it queries the clusters on boot for new (or absent) ESXi hosts. Each cluster then updates vCenter Server with its real-time status.
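Conceptually, treating the cluster as the source of truth boils down to a set comparison between what the restored vCenter remembers and what the cluster reports. The Python sketch below is a hypothetical illustration of that idea using host names. It does not reflect how vCenter Server actually performs the reconciliation.

```python
def reconcile_hosts(vcenter_view: set[str], cluster_view: set[str]) -> dict[str, set[str]]:
    """Compare a restored vCenter's host list with what the cluster reports.

    The cluster is treated as the source of truth, so the result says which
    hosts vCenter must add or drop to match it.
    """
    return {
        "add": cluster_view - vcenter_view,     # joined the cluster after the backup
        "remove": vcenter_view - cluster_view,  # left the cluster after the backup
    }


if __name__ == "__main__":
    restored = {"esxi-01", "esxi-02"}             # hosts known at backup time
    reported = {"esxi-01", "esxi-02", "esxi-03"}  # hosts the cluster reports now
    print(reconcile_hosts(restored, reported))
```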

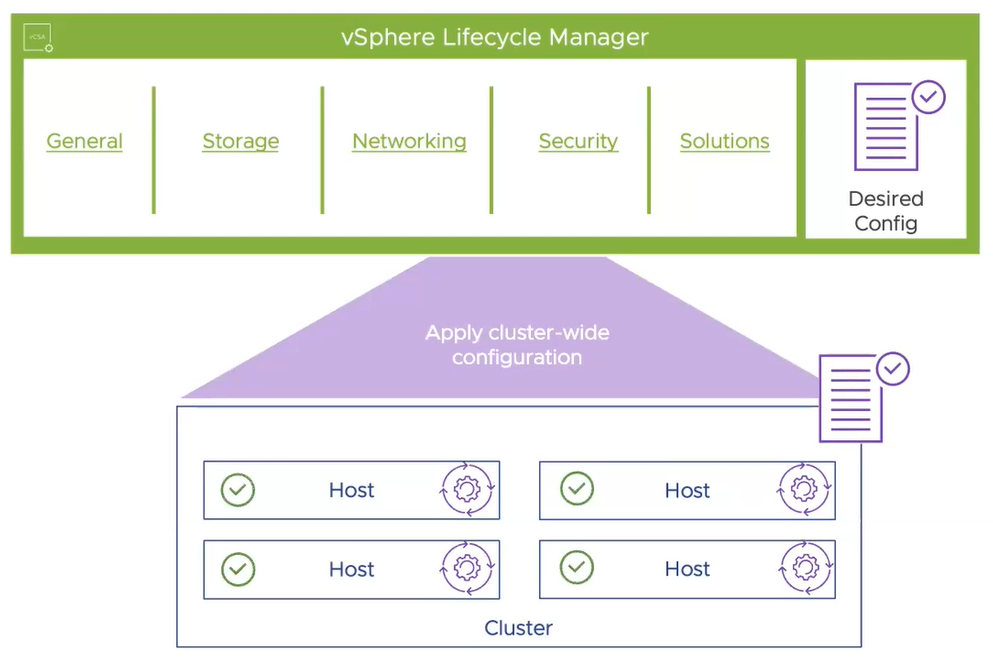

vSphere Configuration Profiles

Host Profiles are still available in vSphere 8, but vSphere Configuration Profiles are also being introduced as a “Tech Preview” feature.

Rather than defining a profile-level configuration and attaching it to objects such as ESXi hosts, you now define the configuration on the cluster object, and its child hosts inherit that configuration.

This is similar to how Cluster Images work in Lifecycle Manager and ensures a consistent configuration for a cluster’s hosts.

Furthermore, once the feature reaches general availability (GA), it will monitor compliance, report on configuration drift, and allow remediation back to the desired state if a host drifts away from the desired configuration.

This is a much more scalable approach than was available in the past.
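The desired-state model behind Configuration Profiles defines one configuration on the cluster and checks it against every child host. It can be sketched in a few lines of Python. The setting names, data layout and functions here are hypothetical. They illustrate drift detection and remediation in general, not the actual Configuration Profiles schema or API.

```python
from typing import Any

Config = dict[str, Any]


def detect_drift(desired: Config, actual: Config) -> dict[str, tuple[Any, Any]]:
    """Map each drifted setting to (desired value, actual value)."""
    return {
        key: (want, actual.get(key))
        for key, want in desired.items()
        if actual.get(key) != want
    }


def compliance_report(desired: Config, hosts: dict[str, Config]) -> dict[str, dict[str, tuple[Any, Any]]]:
    """Check every child host against the single cluster-level configuration."""
    return {name: detect_drift(desired, config) for name, config in hosts.items()}


def remediate(desired: Config, actual: Config) -> Config:
    """Return the host configuration with every desired setting re-applied."""
    return {**actual, **desired}


if __name__ == "__main__":
    # Hypothetical setting names; real Configuration Profiles use their own schema.
    cluster_config = {"ntp.servers": ["ntp1.example.com"], "ssh.enabled": False}
    hosts = {
        "esxi-01": {"ntp.servers": ["ntp1.example.com"], "ssh.enabled": False},
        "esxi-02": {"ntp.servers": ["ntp1.example.com"], "ssh.enabled": True},
    }
    print(compliance_report(cluster_config, hosts))
```

Settings that exist on a host but not in the cluster configuration are ignored here. Whether the real feature flags such extra settings is not covered by this sketch.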

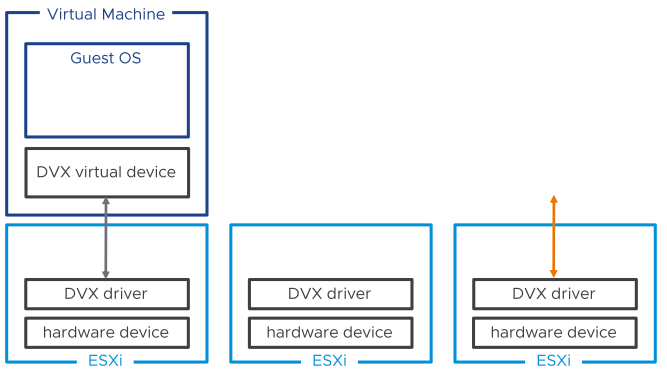

Device Virtualization Extensions

In the past, VMs that use hardware directly (via DirectPathIO, for example) were restricted in which virtualization features they could use.

Device Virtualization Extensions (DVX) build on DirectPathIO by introducing an API that hardware vendors can use to support virtualization features, including:

- Live Migration

- Suspend & Resume

- Disk and Memory snapshots

Essentially, this means that VMs using DirectPathIO will in future be able to utilise more virtualization features (vMotion, etc.) instead of being tied to the underlying host.

Guest Operating Systems

Virtual Hardware Version 20

A new hardware version ships with vSphere 8. Hardware Version 20 includes the following:

Virtualization Hardware

- Support for the latest Intel and AMD CPUs

- Device Virtualization Extensions

- Support for up to 32 DirectPathIO devices

Guest Services for Applications

- vSphere Datasets

- Application Aware Migrations

- Latest Guest OS Support

Performance and Scale

- Support for up to 8 vGPU devices

- Device Groups

- High Latency Sensitivity with Hyperthreading

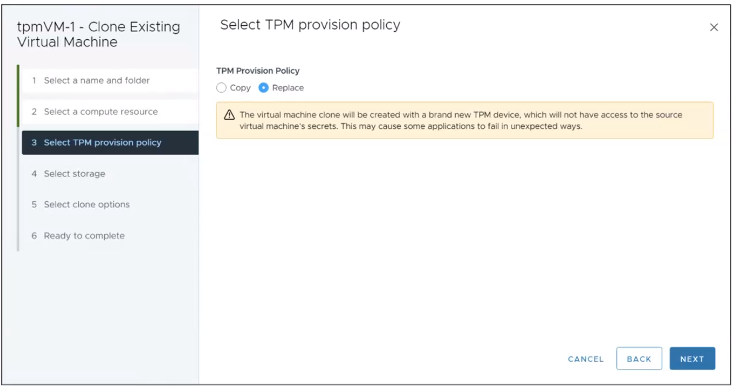

Virtual TPM Provisioning Policy

Copying virtual machines that have vTPMs creates a security issue, because every copy ends up with the same vTPM secrets as the original virtual machine.

In vSphere 8, a vTPM provisioning policy lets you choose whether to clone or replace the vTPM device when cloning a virtual machine.

The Clone option copies the vTPM secrets, whereas the Replace option resets the vTPM device as if it were a newly added one.
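The difference between the two options comes down to whether the new VM's vTPM secret is shared with the source. This Python model is purely illustrative. The classes, the `clone_vm()` function and the 32-byte secret are hypothetical stand-ins, not the vSphere API or the real vTPM format.

```python
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class VTpm:
    secret: bytes


@dataclass(frozen=True)
class VirtualMachine:
    name: str
    vtpm: VTpm


def clone_vm(source: VirtualMachine, new_name: str, vtpm_policy: str) -> VirtualMachine:
    """Clone `source`, applying the chosen vTPM provisioning policy."""
    if vtpm_policy == "clone":
        # Copy the vTPM as-is: the new VM shares the source's secret.
        vtpm = source.vtpm
    elif vtpm_policy == "replace":
        # Provision a fresh vTPM with a newly generated secret.
        vtpm = VTpm(secret=secrets.token_bytes(32))
    else:
        raise ValueError(f"unknown vTPM policy: {vtpm_policy!r}")
    return VirtualMachine(name=new_name, vtpm=vtpm)


if __name__ == "__main__":
    template = VirtualMachine("win-template", VTpm(secrets.token_bytes(32)))
    cloned = clone_vm(template, "win-01", "clone")
    fresh = clone_vm(template, "win-02", "replace")
    print(cloned.vtpm.secret == template.vtpm.secret)  # True
    print(fresh.vtpm.secret == template.vtpm.secret)   # False (with overwhelming probability)
```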

Migration-Aware Applications

In the past, certain types of applications, especially time-sensitive, VoIP, or clustered applications, did not work well with vMotion.

Applications can now be written to be vMotion-aware. For example, an application can stop its services before a vMotion starts and resume them afterward. Alternatively, a clustered application can fail over to another cluster member, delaying the start of the vMotion operation up to a point.
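The article does not describe the notification mechanism itself. The following Python sketch therefore models only the application side of the pattern: stop (or fail over) before the migration, then resume afterward. The class, the method names and the `simulate_vmotion()` driver are all hypothetical. They are not a VMware Tools or vSphere API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class MigrationAwareService:
    """Hypothetical clustered service that reacts to vMotion notifications."""

    name: str
    is_primary: bool = False
    running: bool = True
    peer: MigrationAwareService | None = None
    events: list[str] = field(default_factory=list)

    def on_migration_start(self) -> bool:
        """Prepare for vMotion; return True once it is safe to proceed."""
        if self.is_primary and self.peer is not None:
            # Hand the primary role to a cluster member on another host first.
            self.peer.is_primary = True
            self.is_primary = False
            self.events.append("failed over")
        self.running = False
        self.events.append("stopped")
        return True

    def on_migration_end(self) -> None:
        """Resume normal operation after the VM has moved."""
        self.running = True
        self.events.append("resumed")


def simulate_vmotion(service: MigrationAwareService) -> list[str]:
    """Drive one hypothetical vMotion: notify, migrate, then notify again."""
    # A real platform might delay the migration (within limits) until the app is ready.
    if service.on_migration_start():
        service.events.append("migrated")
        service.on_migration_end()
    return service.events


if __name__ == "__main__":
    node_a = MigrationAwareService("node-a", is_primary=True)
    node_b = MigrationAwareService("node-b")
    node_a.peer = node_b
    print(simulate_vmotion(node_a))
```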

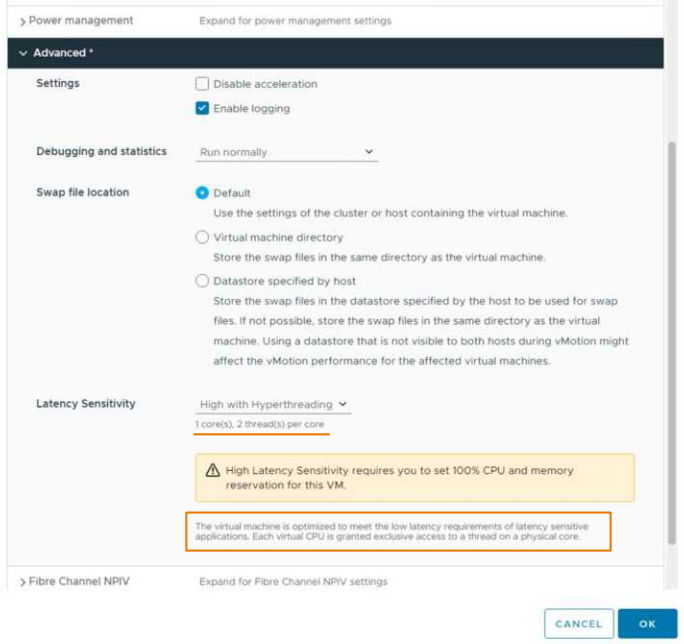

Latency Sensitive Applications

Latency sensitivity options have been available for the past few major releases. In this release, significant work has gone into improving support for latency-sensitive applications on the CPU side.

When the new option is enabled, vCPUs are scheduled on the same hyper-threaded physical CPU core. Hardware version 20 is required, and the option can be configured easily in the VM settings:

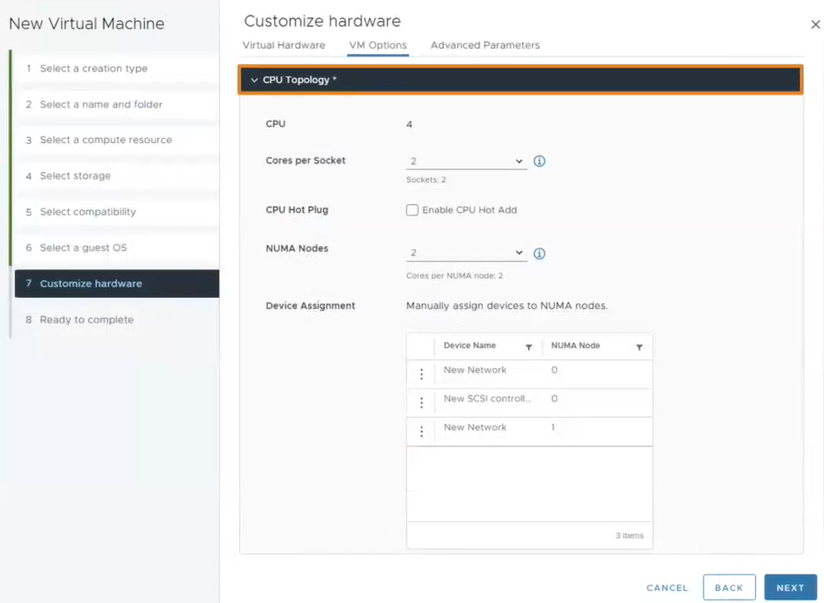

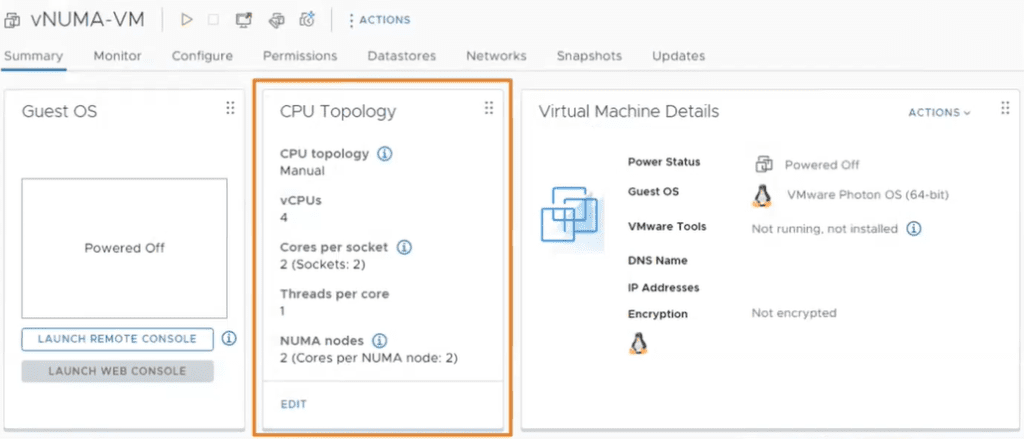

vNUMA Configuration and Visualization

vNUMA is far from a new vSphere concept, but it was previously configured via the CLI and advanced settings, which made it complex to manage.

In vSphere 8, vNUMA-related options can be configured in the vSphere UI. There is also a useful vNUMA visualization directly in the vSphere UI. Hardware Version 20 is required for this feature.

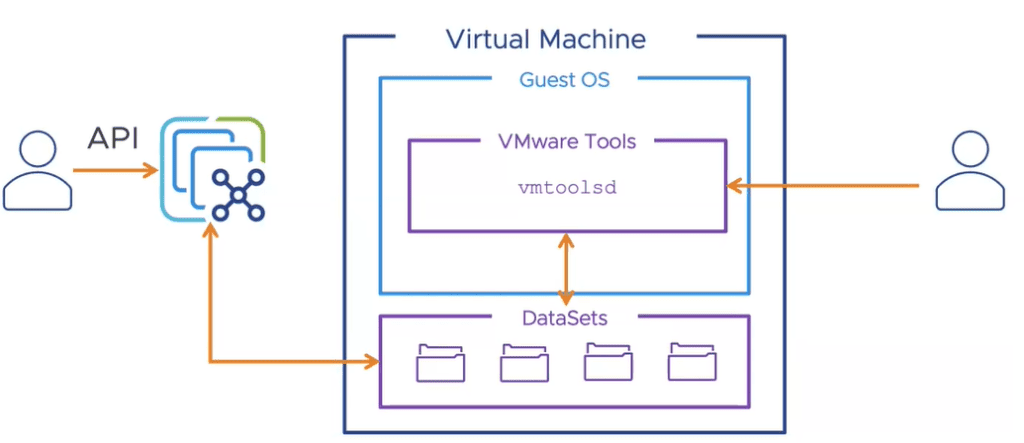

vSphere DataSets – Guest Data Sharing

This new feature allows you to create small datasets that can be shared between vSphere and a VM's guest operating system.

This is particularly useful in the following scenarios:

- Guest Deployment Status

- Guest Agent Configuration

- Guest Inventory Management

Again, hardware version 20 and VMware Tools are required for virtual machines to use this feature.
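The following Python model illustrates the idea with a dataset that has separate access rights for the vSphere side and the guest side, applied to the guest deployment status scenario. The per-side access modes, the class and its methods are assumptions made for this sketch. Consult the vSphere API documentation for the real dataset interface.

```python
from enum import Enum


class Access(Enum):
    NONE = "none"
    READ_ONLY = "read_only"
    READ_WRITE = "read_write"


class Dataset:
    """Hypothetical small key/value dataset shared between vSphere and a guest."""

    def __init__(self, name: str, host_access: Access, guest_access: Access) -> None:
        self.name = name
        self._access = {"host": host_access, "guest": guest_access}
        self._entries: dict[str, str] = {}

    def _check(self, side: str, write: bool) -> None:
        # Reject unknown sides, then enforce that side's access mode.
        if side not in self._access:
            raise ValueError(f"side must be 'host' or 'guest', not {side!r}")
        access = self._access[side]
        if access is Access.NONE or (write and access is not Access.READ_WRITE):
            action = "write to" if write else "read"
            raise PermissionError(f"{side} may not {action} dataset {self.name!r}")

    def set(self, side: str, key: str, value: str) -> None:
        self._check(side, write=True)
        self._entries[key] = value

    def get(self, side: str, key: str) -> str:
        self._check(side, write=False)
        return self._entries[key]


if __name__ == "__main__":
    # Guest deployment status: the guest reports progress, vSphere only reads it.
    status = Dataset("deployment-status", host_access=Access.READ_ONLY, guest_access=Access.READ_WRITE)
    status.set("guest", "phase", "complete")
    print(status.get("host", "phase"))  # complete
```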

VMware vSphere with Tanzu

vSphere with Tanzu was originally released with vSphere 7. vSphere 8 introduces Tanzu Kubernetes Grid (TKG) 2.0.

One TKG Runtime

Previously, Tanzu came in several variants, including TKGs (Supervisor and guest clusters, for vSphere only) and TKGm. This release consolidates these choices into a single TKG runtime that can be used anywhere.

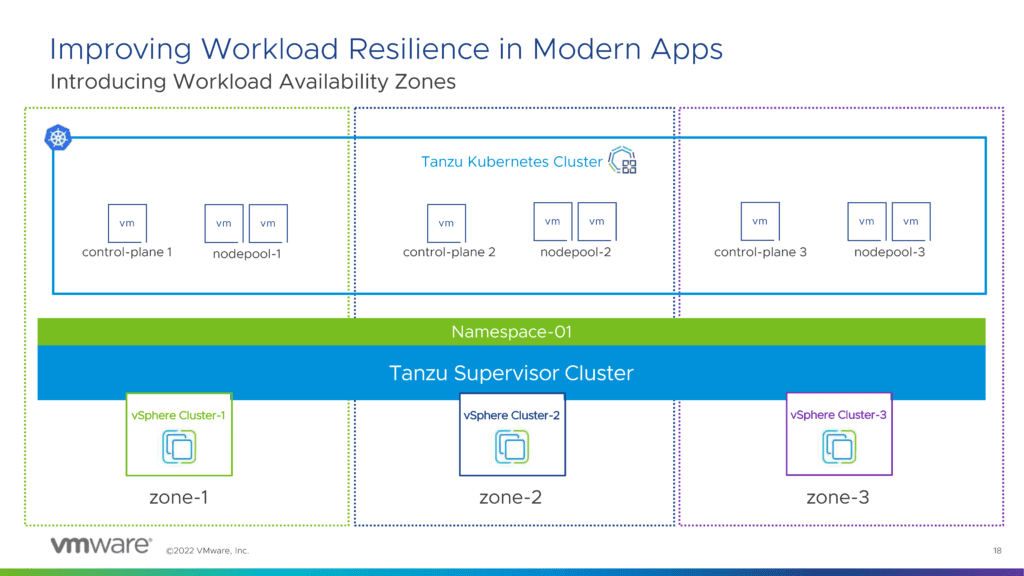

Workload Availability Zones

Workload Availability Zones is a new feature that lets you isolate workloads across several vSphere clusters. Supervisor and Tanzu Kubernetes clusters can then be deployed across these zones. This ensures that worker nodes are spread across different vSphere clusters rather than sharing one, improving availability.

Three Workload Availability Zones are currently required. Once they are activated, you can continue to use the traditional cluster-based deployment as well as the new Availability Zones.

Initially, there will be a one-to-one relationship between a Workload Availability Zone and a vSphere cluster, with improvements coming soon.
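The availability benefit comes from spreading worker nodes so that no single vSphere cluster hosts all of them. This Python sketch shows one simple way to do that: round-robin placement across three zones, each mapped one-to-one to a vSphere cluster. The function and all names are hypothetical, and the real Supervisor placement logic may differ.

```python
def place_workers(worker_count: int, zones: dict[str, str]) -> dict[str, str]:
    """Spread worker nodes round-robin across zones; return worker -> vSphere cluster.

    `zones` maps each Workload Availability Zone to its vSphere cluster
    (one-to-one, as described above).
    """
    if len(zones) != 3:
        raise ValueError("three Workload Availability Zones are currently required")
    zone_names = sorted(zones)
    return {
        f"worker-{i}": zones[zone_names[i % len(zone_names)]]
        for i in range(worker_count)
    }


if __name__ == "__main__":
    # Hypothetical zone and cluster names.
    zones = {"zone-a": "cluster-01", "zone-b": "cluster-02", "zone-c": "cluster-03"}
    for worker, cluster in place_workers(5, zones).items():
        print(f"{worker} -> {cluster}")
```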

Customization of PhotonOS and Ubuntu Images

Both PhotonOS and Ubuntu images can now be customized and stored in a Content Library for ease of management.

Summary & vSphere 8 Download Links

There’s a lot to digest in vSphere 8. Don’t forget to subscribe to the newsletter to keep up to date on other VMware updates, such as those in the Storage (vSAN), Networking, and Management spaces.

I provide a comprehensive “What’s New” guide on release day for many of the VMware solutions, so don’t miss out!

vSphere 8 is now available to download.

Source: https://virtualg.uk/